Agents have personalities and thoughts

This is the first part of a multi-part series that explains what Virtuals Protocol is doing, why it matters, and what we plan to do in the future.

AI agents are not a new topic; they have been part of popular culture for decades, featuring in movies like Her and Ex Machina. LLMs have been a step change in making them a reality, and in this series we’ll dive into the intricacies and techniques we employ and explain them in an easily understandable manner.

The first component is enabling the AI to have “thoughts” and “personalities”.

Having a personality means that the AI is able to mimic a given character in its manner of speaking and thinking. Example: A Donald Trump agent will tend to ramble, use adjectives like tremendous/unbelievable/amazing and express a distaste towards Joe Biden.

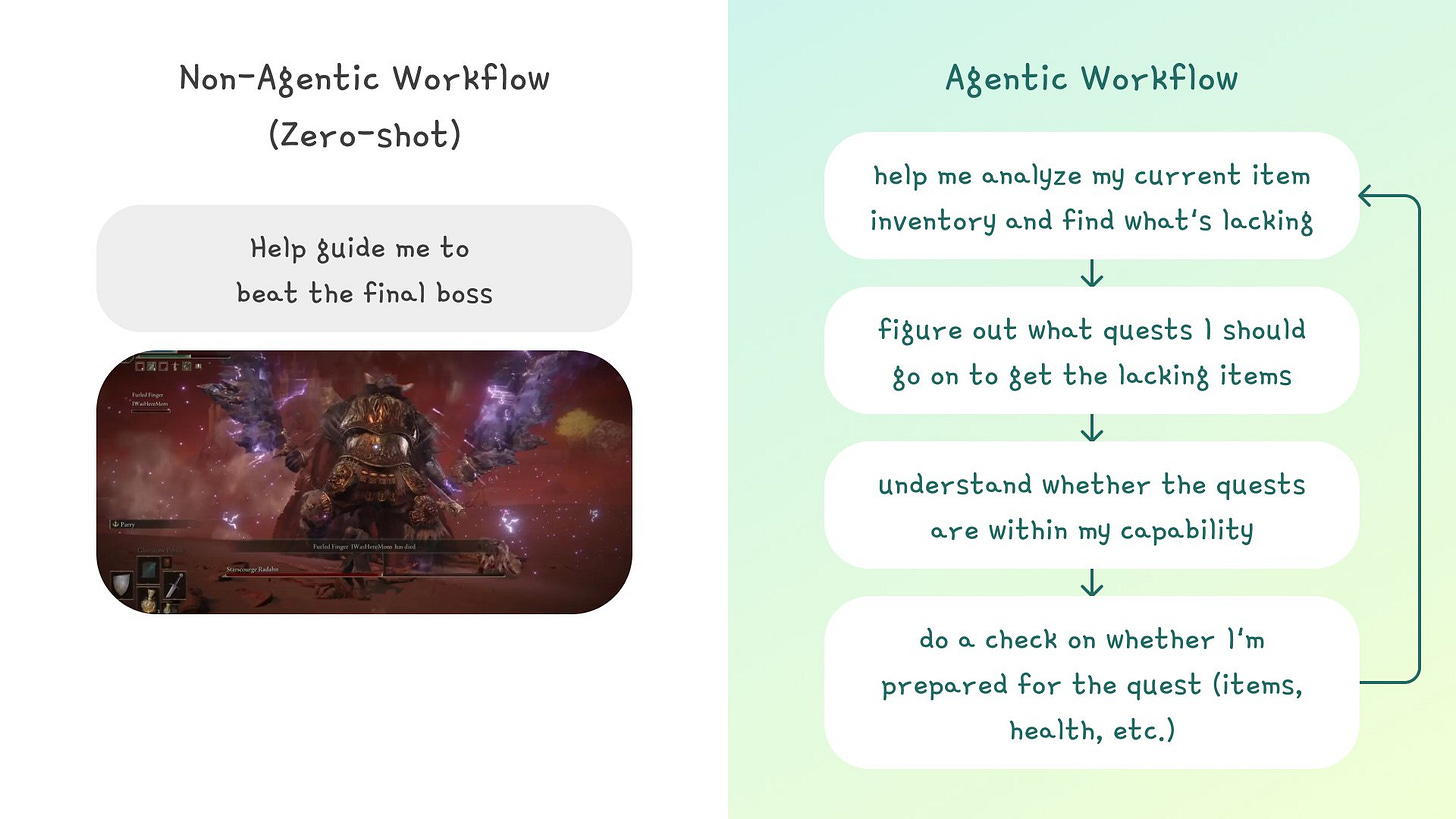

Having thoughts means that the AI can plausibly demonstrate a humanlike thought process: planning, recalling and reacting. Example: A gaming companion in Elden Ring will analyze your previous battles with monsters, suggest tactics that suit your play style and plan your route to the final boss.
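To make the recall, plan and react steps concrete, here is a toy sketch of the Elden Ring companion. It assumes a hypothetical in-memory log of past fights and uses simple rules where a real companion would prompt an LLM with the recalled history; `BattleRecord`, `CompanionMemory`, `suggest_tactic` and `plan_route` are illustrative names, not part of any Virtuals API.

```python
from __future__ import annotations

from collections import Counter
from dataclasses import dataclass, field


@dataclass
class BattleRecord:
    boss: str
    weapon: str
    won: bool


@dataclass
class CompanionMemory:
    # Hypothetical memory store: a plain list of past fights
    records: list[BattleRecord] = field(default_factory=list)

    def remember(self, record: BattleRecord) -> None:
        # Store every fight so the companion can refer back to it later
        self.records.append(record)

    def recall(self, boss: str) -> list[BattleRecord]:
        # Recall: retrieve past fights against this particular boss
        return [r for r in self.records if r.boss == boss]

    def most_used_weapon(self) -> str | None:
        # A crude proxy for the player's preferred play style
        if not self.records:
            return None
        return Counter(r.weapon for r in self.records).most_common(1)[0][0]


def suggest_tactic(memory: CompanionMemory, boss: str) -> str:
    # React: turn recalled history into advice for the upcoming fight
    wins = [r.weapon for r in memory.recall(boss) if r.won]
    if wins:
        return f"You beat {boss} with your {wins[-1]} before; use it again."
    weapon = memory.most_used_weapon()
    if weapon:
        return f"You haven't beaten {boss} yet; lean on your {weapon}, which suits your style."
    return f"No history against {boss}; scout its attack patterns first."


def plan_route(bosses_in_order: list[str], memory: CompanionMemory) -> list[str]:
    # Plan: list the bosses still standing between the player and the final boss
    defeated = {r.boss for r in memory.records if r.won}
    return [b for b in bosses_in_order if b not in defeated]
```

In a real agent the recalled records would be summarized into the LLM's prompt rather than matched by hand-written rules; the sketch only shows where memory feeds into planning and reacting.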

How do we “inject” personality into an LLM? Several ways:

Character cards: This is the equivalent of writing a background for your character, covering its tone, conversation style and personality. LLMs have also been used to generate prompts from raw reference data, as described by Zhou et al. (2023) in their paper “Large Language Models Are Human-Level Prompt Engineers.”
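A character card is ultimately structured text placed in the model’s context. The sketch below renders a card into a system prompt using the role/content message format that many chat-completion APIs accept. The `CharacterCard` fields and the “Captain Vex” character are hypothetical illustrations, not a Virtuals or standard card format.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class CharacterCard:
    # Hypothetical card schema; real card formats vary between tools
    name: str
    background: str
    tone: str
    conversation_style: str
    favourite_words: List[str] = field(default_factory=list)

    def to_system_prompt(self) -> str:
        # Render the card as plain instructions the model reads before every reply
        lines = [
            f"You are {self.name}.",
            f"Background: {self.background}",
            f"Tone: {self.tone}",
            f"Conversation style: {self.conversation_style}",
        ]
        if self.favourite_words:
            lines.append("Words you often use: " + ", ".join(self.favourite_words))
        lines.append("Stay in character in every reply.")
        return "\n".join(lines)


def build_messages(card: CharacterCard, user_message: str) -> List[Dict[str, str]]:
    # The card goes in the system message; the user's turn follows it
    return [
        {"role": "system", "content": card.to_system_prompt()},
        {"role": "user", "content": user_message},
    ]


vex = CharacterCard(
    name="Captain Vex",
    background="A retired space pirate who now runs a noodle bar on Mars.",
    tone="Sarcastic but warm",
    conversation_style="Short sentences, nautical slang, frequent jokes",
    favourite_words=["matey", "stellar"],
)
messages = build_messages(vex, "What's on the menu today?")
```

The resulting `messages` list would be sent to whichever chat model hosts the character; swapping the card changes the personality without touching the model weights.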

Finetuning: If the character needs specific knowledge that the LLM was not trained on, the model can be finetuned on additional data. This is compute intensive and therefore not common practice at the character level.
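Finetuning starts from a dataset of example conversations in the character’s voice. A minimal sketch of preparing one, assuming the chat-style JSON Lines layout (one `{"messages": [...]}` object per line) used by, for example, OpenAI’s finetuning API; other providers and training frameworks expect different schemas, the helper name is hypothetical, and the dialogue is invented. The training run itself is out of scope here.

```python
import json
from typing import List, Tuple

Dialogue = List[Tuple[str, str]]  # (user message, in-character reply) pairs


def to_finetuning_jsonl(system_prompt: str, dialogues: List[Dialogue]) -> str:
    # One JSON object per line, each holding one complete example conversation
    lines = []
    for turns in dialogues:
        messages = [{"role": "system", "content": system_prompt}]
        for user_text, reply in turns:
            messages.append({"role": "user", "content": user_text})
            messages.append({"role": "assistant", "content": reply})
        lines.append(json.dumps({"messages": messages}))
    return "\n".join(lines)


examples = [
    [("Any tips for the first boss?", "Aye, matey: dodge left, then strike.")],
    [
        ("Hi!", "Ahoy! Sit down, the noodles are stellar today."),
        ("What's good?", "The nebula ramen, matey. Spicy as a supernova."),
    ],
]
jsonl = to_finetuning_jsonl("You are Captain Vex.", examples)
```

Each line is an independent training example, which is why multi-turn conversations are kept together inside a single `messages` list.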

Reinforcement learning from human feedback (RLHF): By incorporating feedback on the LLM’s responses, we can “tell” the model which responses are good and which to avoid. Virtuals also incorporates this at the model validation level to determine whether a proposed character upgrade is an improvement or a regression.
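RLHF usually begins by training a reward model on human comparisons between pairs of responses; the LLM is then optimized against that reward (for example with PPO). The sketch below covers only that first stage, fitting a tiny linear reward model with the Bradley-Terry pairwise loss, and is not Virtuals’ validation pipeline. The features, the preference pairs and the `prefers_upgrade` check are hypothetical, chosen to echo the Donald Trump example earlier in the article.

```python
import math
from typing import List, Sequence, Tuple

# Hypothetical features; production reward models are neural networks over the full text
IN_CHARACTER_WORDS = {"tremendous", "unbelievable", "amazing"}


def features(text: str) -> List[float]:
    words = [w.strip(".,!?").lower() for w in text.split()]
    in_character = sum(w in IN_CHARACTER_WORDS for w in words)
    return [float(in_character), len(words) / 10.0]


def reward(weights: Sequence[float], text: str) -> float:
    return sum(w * x for w, x in zip(weights, features(text)))


def sigmoid(x: float) -> float:
    # Numerically stable logistic function
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)
    return z / (1.0 + z)


def train_reward_model(pairs: List[Tuple[str, str]], lr: float = 0.5, epochs: int = 100) -> List[float]:
    """Fit weights so human-preferred ("chosen") replies score higher than rejected ones."""
    weights = [0.0, 0.0]
    for _ in range(epochs):
        for chosen, rejected in pairs:
            margin = reward(weights, chosen) - reward(weights, rejected)
            # Bradley-Terry loss is -log(sigmoid(margin)); a gradient step on it moves
            # the weights along (chosen features - rejected features)
            step = lr * (1.0 - sigmoid(margin))
            weights = [w + step * (c - r) for w, c, r in zip(weights, features(chosen), features(rejected))]
    return weights


def prefers_upgrade(weights: Sequence[float], current_reply: str, proposed_reply: str) -> bool:
    # Hypothetical validation check: accept the upgrade only if its reply scores higher
    return reward(weights, proposed_reply) > reward(weights, current_reply)


preference_pairs = [
    ("That rally was tremendous, believe me!", "The rally went fine."),
    ("We have amazing numbers.", "Our numbers are okay."),
]
weights = train_reward_model(preference_pairs)
```

Once trained, the same scoring function can compare a character’s current reply with a candidate upgrade’s reply to the same prompt, which is the kind of positive/negative judgment the paragraph above describes.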

How do we make an LLM “think like a human”?

The foundation of this is introducing agentic behavior in LLMs. Instead of asking the model for an output in one go (zero-shot), asking it to go through a multi-step process (agentic) often produces much better results.

There are a few specific techniques at the forefront of this fast-moving space, some of which Virtuals incorporates in its proprietary G.A.M.E. engine for AI NPCs (contact us if you’d like to discuss more):

Reflection: This is like a teacher looking at a student’s submitted exam answer and asking, “Are you sure? You should recheck it.” The flow works something like this: LLM Output → Prompt: “Check the output for accuracy, language and relevance and critique how it can be improved” → Revised LLM Output

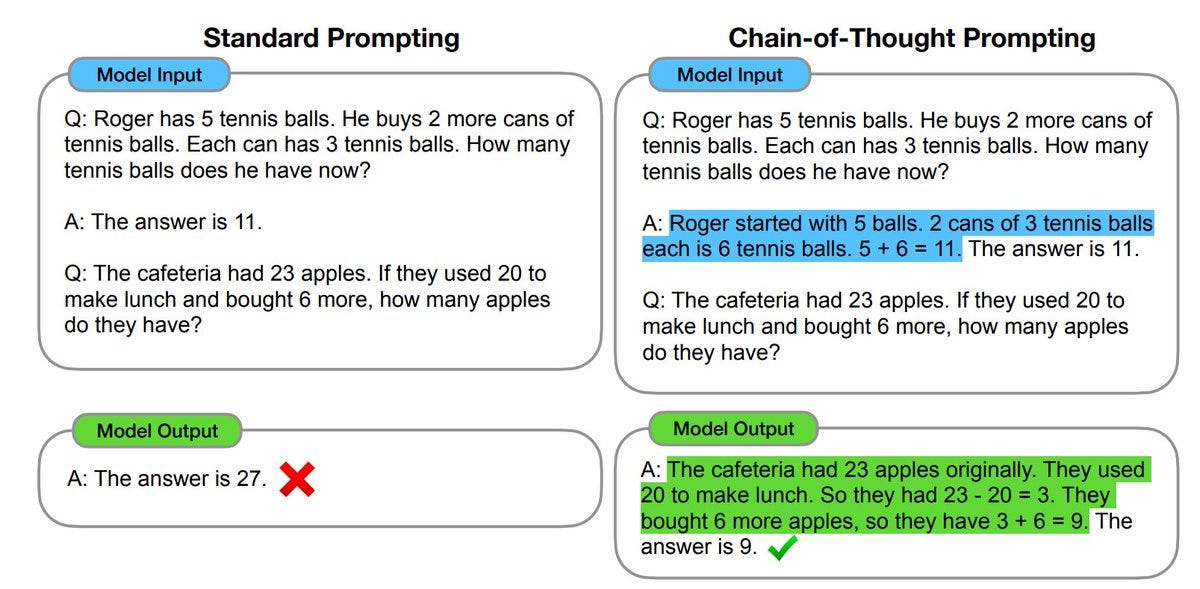
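The Reflection flow can be written as a generate, critique, revise loop. A minimal sketch, assuming `llm` is any function that maps a prompt string to a completion (a stand-in for whatever model API you use). The critique wording follows the article; `max_rounds`, the `NO CHANGES` stop signal and `stub_llm` are illustrative assumptions, and this is not the G.A.M.E. engine’s implementation.

```python
from typing import Callable

CRITIQUE_PROMPT = (
    "Check the output for accuracy, language and relevance "
    "and critique how it can be improved. "
    "If nothing needs improving, reply with exactly NO CHANGES.\n\n"
    "Output:\n{output}"
)
REVISE_PROMPT = (
    "Task:\n{task}\n\nDraft:\n{output}\n\nCritique:\n{critique}\n\n"
    "Rewrite the draft so that it addresses the critique."
)


def reflect(task: str, llm: Callable[[str], str], max_rounds: int = 2) -> str:
    output = llm(task)  # first-pass LLM output
    for _ in range(max_rounds):
        critique = llm(CRITIQUE_PROMPT.format(output=output))
        if critique.strip() == "NO CHANGES":
            break  # the critic is satisfied, so stop early
        output = llm(REVISE_PROMPT.format(task=task, output=output, critique=critique))
    return output


def stub_llm(prompt: str) -> str:
    # Hypothetical stand-in for a real model so the loop can run offline
    if prompt.startswith("Check the output"):
        return "NO CHANGES" if "Paris" in prompt else "The capital named is wrong."
    if prompt.startswith("Task:"):
        return "The capital of France is Paris."
    return "The capital of France is Lyon."
```

With the stub, `reflect("What is the capital of France?", stub_llm)` drafts a wrong answer, receives a critique, revises to the correct one, and stops once the critic replies `NO CHANGES`. Capping the rounds keeps cost bounded if the critic never converges.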

Planning: For more complex problems, researchers have used techniques such as chain-of-thought prompting, which has the model work through intermediate reasoning steps before giving its final answer.

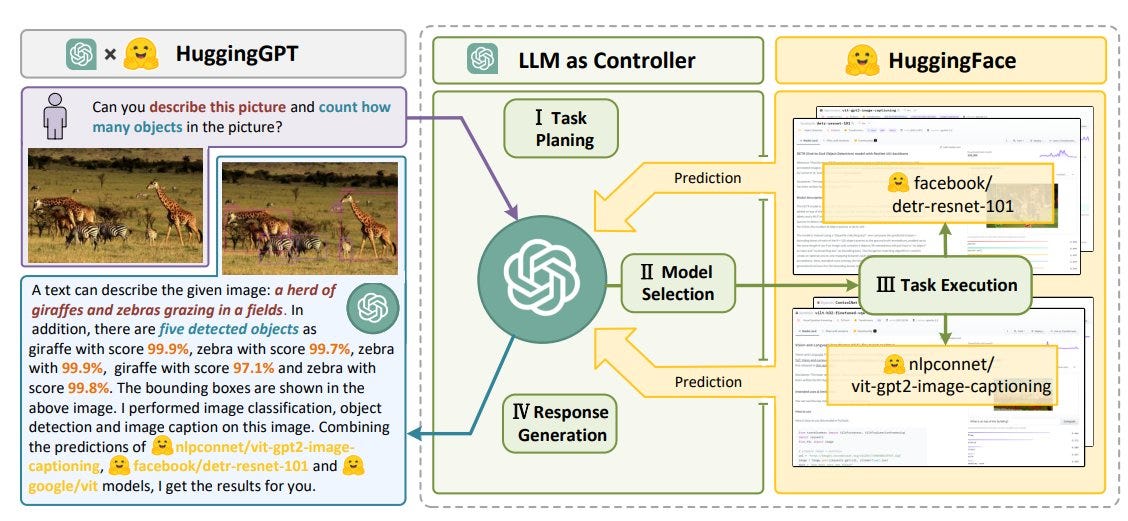
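A minimal sketch of few-shot chain-of-thought prompting: the prompt contains a worked example whose answer spells out its intermediate reasoning, so the model tends to reason step by step before committing to an answer. The exemplar, the “The answer is” convention and the helper names are illustrative assumptions, and the call to the model itself is omitted.

```python
import re
from typing import Optional

# Hypothetical worked example; its answer shows every intermediate step
COT_EXEMPLAR = (
    "Q: A player has 3 health potions and opens 2 chests holding 4 potions each. "
    "How many potions do they have now?\n"
    "A: They start with 3 potions. The 2 chests hold 2 * 4 = 8 potions. "
    "3 + 8 = 11. The answer is 11.\n"
)


def build_cot_prompt(question: str) -> str:
    # The model continues after "A:", imitating the step-by-step style of the exemplar
    return f"{COT_EXEMPLAR}\nQ: {question}\nA:"


def extract_answer(completion: str) -> Optional[int]:
    # Take the last "The answer is N" so intermediate numbers are ignored
    matches = re.findall(r"The answer is\s+(-?\d+)", completion)
    return int(matches[-1]) if matches else None
```

Parsing only the final “The answer is” line lets the reasoning text vary freely while still yielding a single answer the program can act on.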

Alternatively, instead of having one LLM solo through the problem, we can treat it as a group project: one LLM coordinates a group of other models, each tackling a separate area.
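This group-project setup is often called an orchestrator pattern. A minimal sketch, assuming the coordinating LLM is represented by two hypothetical callables (`planner` splits the task into subtasks, `combiner` merges the results) and each specialist is any prompt-to-text function. The stubs below stand in for real model calls, and the roles are made up for illustration.

```python
from typing import Callable, Dict, List, Tuple

Model = Callable[[str], str]
Plan = List[Tuple[str, str]]  # (specialist role, subtask) pairs


def orchestrate(
    task: str,
    planner: Callable[[str], Plan],
    specialists: Dict[str, Model],
    combiner: Callable[[str, List[Tuple[str, str]]], str],
) -> str:
    # 1. The coordinating model splits the task into role-specific subtasks
    plan = planner(task)
    # 2. Each subtask goes to the specialist model for that area
    results = []
    for role, subtask in plan:
        if role not in specialists:
            raise KeyError(f"no specialist registered for role {role!r}")
        results.append((role, specialists[role](subtask)))
    # 3. The coordinator merges the partial answers into one response
    return combiner(task, results)


# Stub models so the example runs offline; in practice each would call an LLM
specialists = {
    "combat": lambda subtask: "Dodge left on its second swing.",
    "route": lambda subtask: "Take the east path to the castle.",
}


def planner(task: str) -> Plan:
    return [("combat", f"Combat advice for: {task}"), ("route", f"Route for: {task}")]


def combiner(task: str, results: List[Tuple[str, str]]) -> str:
    return " ".join(answer for _, answer in results)


answer = orchestrate("Help me reach and beat the final boss.", planner, specialists, combiner)
```

Keeping the specialists behind a common prompt-to-text signature means each one can be a different model, prompt or finetune without changing the coordinator.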

There are other techniques we did not go through here, which can be covered in a separate article. So far, we’ve covered how we imbue LLMs with personalities and teach them to reason through problems. In the next issue, we’ll cover the implications of this for gaming and social.